Seminar

Temporal Modeling and Data Synthesis for Visual Understanding

Abstract: In this talk, I will present two recent pieces of work on leveraging temporal information and synthetic data to enhance video and image understanding. In the first part, I will introduce a progressive learning framework, Spatio-TEmporal Progressive (STEP), for action detection in videos. STEP is able to more effectively make use of longer temporal information, [...]

Multiple Drone Vision and Cinematography

Abstract: The aim of drone cinematography is to develop innovative intelligent single- and multiple-drone platforms for media production to cover outdoor events (e.g., sports) that are typically distributed over large expanses, ranging, for example, from a stadium to an entire city. The drone or drone team, to be managed by the production director and his/her [...]

Modeling, Design, and Analysis for Intelligent Vehicles: Intersection Management, Security-Aware Design, and Automotive Design Automation

Abstract: Advanced Driver Assistance Systems (ADAS), autonomous functions, and connected applications bring a revolution to automotive systems and software. In this talk, several research topics in the domain of automotive systems and software will be introduced: (1) graph-based modeling, scheduling, and verification for intersection management, (2) security-aware design and analysis considering timing, game theory, and [...]

VR facial animation via multiview image translation

Abstract: A key promise of Virtual Reality (VR) is the possibility of remote social interaction that is more immersive than any prior telecommunication media. However, existing social VR experiences are mediated by inauthentic digital representations of the user (i.e., stylized avatars). These stylized representations have limited the adoption of social VR applications in precisely those [...]

Neural Volumes: Learning Dynamic Renderable Volumes from Images

Abstract: Modeling and rendering of dynamic scenes is challenging, as natural scenes often contain complex phenomena such as thin structures, evolving topology, translucency, scattering, occlusion, and biological motion. Mesh-based reconstruction and tracking often fail in these cases, and other approaches (e.g., light field video) typically rely on constrained viewing conditions, which limit interactivity. We [...]

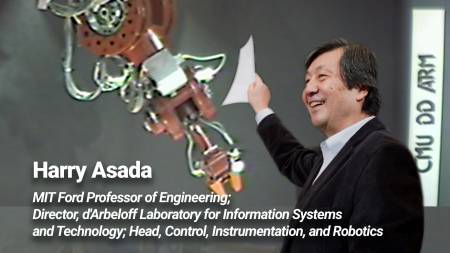

RI40 Seminar: From Direct-Drive to SuperLimb Bionics

In 1980-81 the first Direct-Drive robot was developed at the CMU Robotics Institute. After almost 40 years, Direct-Drive has a renewed interest in the leg robotics community. Robotic legs powered by direct-drive or low gear-reduction motors can better interact with the ground and absorb impacts. In this seminar I will talk about robot design in [...]

Tartan AUV: A Dive into Carnegie Mellon’s RoboSub Team

Abstract: Founded last year, Tartan AUV is Carnegie Mellon’s undergraduate underwater robotics team which competes annually in the RoboSub competition. RoboSub teams must design, build, and test autonomous underwater vehicles that compete each August to complete tasks related to underwater navigation, object detection and manipulation, and acoustic beacon localization. In this talk we will provide [...]

DNA and gammaPNA in programmable nanomaterials for sensing, robotics and manufacturing

Abstract: When programmable nanomaterials are used in conjunction with rapid microfabrication techniques like two-photon polymerization, it becomes possible to rapidly prototype microstructures with nanoscale components. In this research presentation I will introduce DNA nanotechnology using a commonly used simple nanotube motif, and I will illustrate how nucleic acid nanotubes can be used in sensing, robotics [...]

Towards Lightweight Real-time Hand Reconstruction in Challenging

Abstract: Humans naturally use their hands to interact and communicate with their surroundings. Reconstructing these complex and dexterous hand interactions enables sign-language recognition and translation, better assistive robots, and more immersive human-computer interaction (e.g. for AR and VR). To make hand reconstruction usable for the aforementioned applications and to a wide set of users, the [...]

Hybrid Methods for the Integration of Heterogeneous Multimodal Biomedical Data

Abstract: The prevalence of smartphones and wearable devices for health monitoring and widespread use of electronic health records have led to a surge in heterogeneous multimodal healthcare data, collected at an unprecedented scale. My research focuses on developing machine learning techniques that learn salient representations of multimodal, heterogeneous data for biomedical predictive models. The first [...]

The Robots are Coming – to your Farm! AKA: Autonomous and Intelligent Robots in Unstructured Field Environments

Abstract: What if a team of collaborative autonomous robots grew your food for you? In this talk, I will discuss some key advances in robotics, machine learning, and autonomy that will one day enable teams of small robots to grow food for you in your backyard in a fundamentally more sustainable way than modern mega-farms! [...]

Self-Driving Cars & AI: Transforming our Cities and our Lives

Abstract: Recent algorithmic and hardware improvements resulted in several success stories in the field of Artificial Intelligence (AI) which impact our daily lives. However, despite its ubiquity, AI is only just starting to make advances in what may arguably have the largest societal impact thus far, the nascent field of autonomous driving. At Uber ATG, [...]

Improving Robot and Deep Reinforcement Learning via Quality Diversity and Open-Ended Algorithms

Abstract: Quality Diversity (QD) algorithms are those that seek to produce a diverse set of high-performing solutions to problems. I will describe them and a number of their positive attributes. I will then summarize our Nature paper on how they, when combined with Bayesian Optimization, produce a learning algorithm that enables robots, after being damaged, to adapt in 1-2 minutes [...]

Go, fastMRI, and Minecraft: Exploring the limits of AI

Abstract: The application of AI across various domains demonstrates both the promise of existing techniques and their limitations. In this talk, I explore three recent projects and how they shed light on the progress of AI and the challenges to come. These projects include ELF OpenGo, a reimplementation of AlphaZero, fastMRI for reducing the time [...]

Towards Weakly-Supervised Visual Understanding

Abstract: Learning with weak supervision and self-supervision has recently emerged as a compelling tool for leveraging vast amounts of unlabeled or partially-labeled data. In this talk, I will present some of the latest advances in weakly-supervised visual scene understanding from NVIDIA. Specifically, I will summarize and discuss some challenges and potential solutions in weakly-supervised learning, and introduce our [...]

Imaging without focusing: A computational approach to miniaturizing cameras

Abstract: Miniaturization of cameras is key to enabling new applications in areas such as connected devices, wearables, implantable medical devices, in vivo microscopy, and micro-robotics. Recently, lenses were identified as the main bottleneck in miniaturization of cameras. Standard smaller lens-system camera modules have a thickness of about 10 mm or higher, and reducing the size [...]

Towards photo-realistic face digitization from monocular videos

Abstract: Recent advances in face capture now enable digitizing high-quality 3D faces for the entertainment industry. Standardized digitization solutions, however, require tailor-made capture systems and extensive manual work, making them expensive and hard to deploy. With the advent of commodity sensors, new lightweight approaches that push the boundaries of human digitization have been introduced, slowly [...]

Toward telelocomotion: human sensorimotor control of contact-rich robot dynamics

Abstract: Human interaction with the physical world is increasingly mediated by automation -- planes assist pilots, cars assist drivers, and robots assist surgeons. Such semi-autonomous machines will eventually pervade our world, doing dull and dirty work, assisting the elderly and disabled, and responding to disasters. Recent results (e.g. from the DARPA Robotics Challenge) demonstrate that, [...]

Formal Synthesis for Robots

Abstract: In this talk I will describe how formal methods such as synthesis – automatically creating a system from a formal specification – can be leveraged to design robots, explain and provide guarantees for their behavior, and even identify skills they might be missing. I will discuss the benefits and challenges of synthesis techniques and [...]

Reconstructing 3D Human Avatars from Monocular Images

Abstract: Statistical 3D human body models have helped us to better understand human shape and motion and already enabled exciting new applications. However, if we want to learn detailed, personalized, and clothed models of human shape, motion, and dynamics, we require new approaches that learn from ubiquitous data such as plain RGB-images and video. I [...]

Extreme Motions in Biological and Engineered Systems

Abstract: Dr. Temel’s work mainly focuses on understanding the dynamics and energetics of extreme motions in small-scale natural and synthetic systems. Small-scale biological systems achieve extraordinary accelerations, speeds, and forces that can be repeated with minimal costs throughout the life of the organism. Zeynep uses analytical and computational models as well as physical prototypes to learn about these systems, test [...]

Reasoning about complex media from weak multi-modal supervision

Abstract: In a world of abundant information targeting multiple senses, and increasingly powerful media, we need new mechanisms to model content. Techniques for representing individual channels, such as visual data or textual data, have greatly improved, and some techniques exist to model the relationship between channels that are “mirror images” of each other and contain [...]

CANCELLED

Building Trust in Real World Applications of Vision Based Machine Learning

Abstract: In all machine learning problems, there is an explicit trade-off between cost and benefit. In real-world vision problems, this optimization becomes increasingly difficult since those trade-offs directly impact technology and product development as well as business strategy. For any successful business case, it is critical that the cost/benefit trade-offs in [...]

Knowledge Infused Deep Learning

Abstract: This talk is motivated by the following thesis: Background knowledge is key to intelligent decision making. While deep learning methods have made significant strides over the last few years, they often lack the context in which they operate. Knowledge Graphs (and more generally multi-relational graphs) provide a flexible framework to capture and represent knowledge [...]

Yes, That’s a Robot in Your Grocery Store. Now what?

Abstract: Retail stores are becoming ground zero for indoor robotics. Fleets of different robots have to coexist with each other and with humans every day, navigating safely, coordinating missions, and interacting appropriately with people, all at large scale. For us roboticists, stores are giant labs where we're learning what doesn't work and iterating. If we get [...]

Learning to Reconstruct 3D Humans

Abstract: Recent advances in 2D perception have led to very successful systems, able to estimate the 2D pose of humans with impressive robustness. However, our interactions with the world are fundamentally 3D, so to be able to understand, explain and predict these interactions, it is crucial to reconstruct people in 3D. In this talk, I [...]

CANCELLED

Abstract: Before learning robots can be deployed in the real world, it is critical that probabilistic guarantees can be made about the safety and performance of such systems. In recent years, safe reinforcement learning algorithms have enjoyed success in application areas with high-quality models and plentiful data, but robotics remains a challenging domain for scaling [...]

Deep Learning for Understanding Dynamic Visual Data

Abstract: Perceiving dynamic environments from visual inputs allows autonomous agents to understand and interact with the world and is a core topic in Artificial Intelligence. The success of deep learning motivates us to apply deep learning techniques to the perception of dynamic visual data. However, how to design and apply deep neural networks to effectively [...]

Optimizing for coordination with people

https://youtu.be/AQ-w5o2oGI8 Abstract: From autonomous cars to quadrotors to mobile manipulators, robots need to co-exist and even collaborate with humans. In this talk, we will explore how our formalism for decision making needs to change to account for this interaction, and dig our heels into the subtleties of modeling human behavior -- sometimes strategic, often irrational, [...]

Analyzing Grasp Contact via Thermal Imaging

Abstract: Grasping and manipulating objects is an important human skill. Because contact between hand and object is fundamental to grasping, measuring it can lead to important insights. However, observing contact through external sensors is challenging because of occlusion and the complexity of the human hand. I will discuss the use of thermal cameras to capture [...]

Fast Foveation for LIDARs, Projectors and Cameras

Abstract: Most cameras today capture images without considering scene content. In contrast, animal eyes have fast mechanical movements that control how the scene is imaged in detail by the fovea, where visual acuity is highest. This concentrates computational (i.e. neuronal) resources in places where they are most needed. The prevalence of foveation, and the wide [...]

Learning to See Through Occlusions and Obstructions

Virtual VASC: https://cmu.zoom.us/j/249106600 Abstract: Photography allows us to capture and share memorable moments of our lives. However, 2D images appear flat due to the lack of depth perception and may suffer from poor imaging conditions such as taking photos through reflecting or occluding elements. In this talk, I will present our recent efforts to [...]

Detectron2 in Object Detection Research

Virtual VASC: https://cmu.zoom.us/j/249106600 Abstract: Detectron2 is Facebook's library for object detection and segmentation. It has been used widely in FAIR's research and Facebook's products. This talk will introduce detectron2 with a focus on its use in object detection research, including the lessons we learned from building it, as well as the new research enabled [...]

Fairness in visual recognition

Virtual VASC Seminar: https://cmu.zoom.us/j/249106600 Abstract: Computer vision models trained on unparalleled amounts of data hold promise for making impartial, well-informed decisions in a variety of applications. However, more and more historical societal biases are making their way into these seemingly innocuous systems. Visual recognition models have exhibited bias by inappropriately correlating age, gender, sexual [...]

Bio-inspired depth sensing using computational optics

Virtual Seminar: https://cmu.zoom.us/j/249106600 Abstract: Jumping spiders rely on accurate depth perception for predation and navigation. They accomplish depth perception, despite their tiny brains, by using specialized optics. Each principal eye includes a multitiered retina that simultaneously receives multiple images with different amounts of defocus, and distance is decoded from these images with seemingly little [...]

Task-specific Vision DNN Models and Their Relation for Explaining Different Areas of the Visual Cortex

Virtual VASC Seminar: https://cmu.zoom.us/j/249106600 Abstract: Deep Neural Networks (DNNs) are state-of-the-art models for many vision tasks. We propose an approach to assess the relationship between visual tasks and their task-specific models. Our method uses Representation Similarity Analysis (RSA), which is commonly used to find a correlation between neuronal responses from brain data and models. [...]

End-to-end Generative 3D Human Shape and Pose Models and Active Human Sensing

Virtual VASC Seminar: https://cmu.zoom.us/j/249106600 Abstract: I will review some of our recent work in 3D human modeling, synthesis, and active vision. I will present our new, end-to-end trainable nonlinear statistical 3D human shape and pose models of different resolutions (GHUM and GHUMLite) as [...]

Telling Left from Right: Learning Spatial Correspondence Between Sight and Sound

Virtual VASC Seminar: https://cmu.zoom.us/j/92741882813?pwd=R1R0eGRaeXFHTEF2VWNwY2VIZmU5Zz09 Abstract: Self-supervised audio-visual learning aims to capture useful representations of video by leveraging correspondences between visual and audio inputs. Existing approaches have focused primarily on matching semantic information between the sensory streams. In my talk, I’ll describe a novel self-supervised task to leverage an orthogonal principle: matching spatial information in the [...]

The Topology of Learning

Zoom Virtual Meeting: https://cmu.zoom.us/j/92178295543?pwd=L2dwZU5SbDY5NzZZNzZ4ZmFUclRqQT09 Abstract: Deep Neural Networks (DNNs) have revolutionized computer vision. We now have DNNs that achieve top results in many computer vision problems, including object recognition, facial expression analysis, and semantic segmentation, to name but a few. Unfortunately, the rise in performance has come with a cost. DNNs have become so [...]

Implicit Neural Scene Representations

Virtual Zoom Seminar: https://cmu.zoom.us/j/92178295543?pwd=L2dwZU5SbDY5NzZZNzZ4ZmFUclRqQT09 Abstract: How we represent signals has major implications for the algorithms we build to analyze them. Today, most signals are represented discretely: Images as grids of pixels, shapes as point clouds, audio as grids of amplitudes, etc. If images weren't pixel grids - would we be using convolutional neural networks [...]

Computational Imaging: Beyond the Limits Imposed by Lenses

Virtual VASC Seminar: https://cmu.zoom.us/j/92587238250?pwd=S0paYUVBUXozQkFTclMwRUg0MzBNZz09 Abstract: The lens has long been a central element of cameras, since its early use in the mid-nineteenth century by Niepce, Talbot, and Daguerre. The role of the lens, from the daguerreotype to modern digital cameras, is to refract light to achieve a one-to-one mapping between a point in the scene and a point on the sensor. This effect enables the sensor to compute a particular two-dimensional (2D) [...]

Beyond ROS: Using a Data Connectivity Framework to build and run Autonomous Systems

Virtual FRC Seminar: Seminar recording: https://cmu.zoom.us/rec/share/x84qF7_q8TlIcpHoyG_DRa58O6i8aaa8hCAW_fEPxEkBGjBVPyzW_lK0YW30RfJ3?startTime=1598551489000 Passcode: qu6)ePH9 Abstract: Next-generation robotics will need more than the current ROS code in order to comply with the interoperability, security and scalability requirements for commercial deployments. This session will provide a technical overview of ROS, ROS2 and the Data Distribution Service™ (DDS) protocol for data connectivity in safety-critical cyber-physical [...]

Learning 3D Reconstruction in Function Space

Virtual VASC Seminar: https://cmu.zoom.us/j/96635002737?pwd=RkxGVlJaUTlhcDdGeVBPcnpTS015dz09 Abstract: In this talk, I will show several recent results of my group on learning neural implicit 3D representations, departing from the traditional paradigm of representing 3D shapes explicitly using voxels, point clouds or meshes. Implicit representations have a small memory footprint and allow for modeling arbitrary 3D topologies at [...]

Scaling Probabilistically Safe Learning to Robotics

Abstract: Before learning robots can be deployed in the real world, it is critical that probabilistic guarantees can be made about the safety and performance of such systems. In recent years, safe reinforcement learning algorithms have enjoyed success in application areas with high-quality models and plentiful data, but robotics remains a challenging domain for [...]

Compositional Representations for Visual Recognition

Virtual VASC - https://cmu.zoom.us/j/99437689110?pwd=cWxuQkIwWlFFZEk0QkVDUVFiN0lTdz09 Abstract: Compositionality is the ability for a model to recognize a concept based on its parts or constituents. This ability is essential to use language effectively as there exists a very large combination of plausible objects, attributes, and actions in the world. We posit that visual recognition models should be [...]

From kinematic to energetic design and control of wearable robots for agile human locomotion

Abstract: Even with the help of modern prosthetic and orthotic (P&O) devices, lower-limb amputees and stroke survivors often struggle to walk in the home and community. Emerging powered P&O devices could actively assist patients to enable greater mobility, but these devices are currently designed to produce a small set of pre-defined motions. Finite state machines [...]

Making 3D Predictions with 2D Supervision

Abstract: Building computer vision systems that understand 3D shape is important for applications including autonomous vehicles, graphics, and VR / AR. If we assume 3D shape supervision, we can now build systems that do a reasonable job at predicting 3D shapes from images. However, 3D supervision is difficult to obtain at scale; therefore we should [...]

The World’s Tiniest Space Program

Abstract: The aerospace industry has experienced a dramatic shift over the last decade: Flying a spacecraft has gone from something only national governments and large defense contractors could afford to something a small startup can accomplish on a shoestring budget. A virtuous cycle has developed where lower costs have led to more launches and the [...]

Perceiving 3D Human-Object Spatial Arrangements from a Single Image In-the-wild

Abstract: We live in a 3D world that is dynamic—it is full of life, with inhabitants like people and animals who interact with their environment through moving their bodies. Capturing this complex world in 3D from images has a huge potential for many applications such as compelling mixed reality applications that can interact with people [...]

A future with affordable Self-driving vehicles

(Video to appear once approved) Abstract: We are on the verge of a new era in which robotics and artificial intelligence will play an important role in our daily lives. Self-driving vehicles have the potential to redefine transportation as we understand it today. Our roads will become safer and less congested, while parking spots will be repurposed as leisure [...]

Detection of Photo Manipulation with Media Forensics

Abstract: Rapid progress in machine learning, computer vision and graphics leads to successive democratization of media manipulation capabilities. While convincing photo and video manipulation used to require substantial time and skill, modern editors bring (semi-) automated tools that can be used by everyone. Some of the most recent examples include manipulation of human faces, e.g., [...]

Robotics and Biosystems

Abstract: Research at the Center for Robotics and Biosystems at Northwestern University encompasses bio-inspiration, neuromechanics, human-machine systems, and swarm robotics, among other topics. In this talk I will give an overview of some of our recent work on in-hand manipulation, robot locomotion on yielding ground, and human-robot systems. Biography: Kevin Lynch received the B.S.E. degree [...]

Advancing the State of the Art of Computer Vision for Billions of Users

Abstract: At Google, advancing the state of the art of computer vision is very impactful as there are billions of users of Google products, many of which require high-quality, artifact-free images. I will share what we learned from successfully launching core computer vision techniques for various Google products, including PhotoScan (Photos), seamless Google Street View [...]

Learning-based 6D Object Pose Estimation in Real-world Conditions

Abstract: Estimating the 6D pose, i.e., 3D rotation and 3D translation, of objects relative to the camera from a single input image has attracted great interest in the computer vision community. Recent works typically address this task by training a deep network to predict the 6D pose given an image as input. While effective on [...]

SubT Fall Update Webinar Led by CMU’s Robotics Institute faculty members Sebastian Scherer and Matt Travers, as well as OSU’s Geoff Hollinger

We invite you to meet members of the award-winning Team Explorer, the CMU DARPA Subterranean Challenge team, and learn more about this groundbreaking competition. Some of the world's top universities have entered the DARPA Subterranean Challenge, developing technologies to map, navigate, and search underground environments. Led by CMU's Robotics Institute faculty members Sebastian Scherer and Matt [...]

Deep Learning: (still) Not Robust

Abstract: One of the key limitations of deep learning is its inability to generalize to new domains. This talk studies recent attempts at increasing neural network robustness to both natural and adversarial distribution shifts. Adversarial examples, inputs crafted specifically to fool machine learning models, are arguably the most difficult type of domain shift. [...]

Drones in Public: distancing and communication with all users

Abstract: This talk will focus on the role of human-robot interaction with drones in public spaces, covering two individual research areas: proximal interactions in shared spaces and improved communication with both end-users and bystanders. Prior work on human interaction with aerial robots has focused on communication from the users or about the intended direction [...]

End-to-End ‘One Networks’: Learning Regularizers for Least Squares via Deep Neural Networks

Abstract: Linear Restoration Problems (or Linear Inverse Problems) involve reconstructing images or videos from noisy measurement vectors. Notable examples include denoising, inpainting, super-resolution, compressive sensing, deblurring and frame prediction. Often, multiple such tasks should be solved simultaneously, e.g., through Regularized Least Squares, where each individual problem is underdetermined (overcomplete) with infinitely many solutions from which [...]

Data Scalability for Robot Learning

Abstract: Recent progress in robot learning has demonstrated how robots can acquire complex manipulation skills from perceptual inputs through trial and error, particularly with the use of deep neural networks. Despite these successes, the generalization and versatility of robots across environment conditions, tasks, and objects remains a major challenge. And, unfortunately, our existing algorithms and [...]

Carnegie Mellon University

Learning to Generalize beyond Training

Abstract: Generalization, i.e., the ability to adapt to novel scenarios, is the hallmark of human intelligence. While we have systems that excel at cleaning floors, playing complex games, and occasionally beating humans, they are incredibly specific in that they only perform the tasks they are trained for and are miserable at generalization. One of the [...]

Detecting Image Synthesis — Shallow and Deep

Abstract: The proliferation of synthetic media opens the door to malicious uses such as disinformation campaigns, posing potential threats to media integrity and democracy. One way to combat this is to develop forensics algorithms that identify manipulated media. At the beginning of the talk, I will discuss how one can train a model to detect photos manipulated [...]

Deep Learning to Distinguish Recalled but Benign Mammography Images in Breast Cancer Screening

Abstract: Breast cancer screening using the standard mammography exam currently exhibits a high false recall rate (11.6% for women in the U.S.). Only a low proportion (0.5%) of women who were recalled for additional workup were actually found to have breast cancer. As a result of the unnecessary stress and follow-up work from these false [...]

The Plenoptic Camera

Abstract: Imagine a futuristic version of Google Street View that could dial up any possible place in the world, at any possible time. Effectively, such a service would be a recording of the plenoptic function—the hypothetical function described by Adelson and Bergen that captures all light rays passing through space at all times. While the plenoptic function [...]

Photorealistic Reconstruction of Landmarks and People using Implicit Scene Representation

Abstract: Reconstructing scenes to synthesize novel views is a long-standing problem in Computer Vision and Graphics. Recently, implicit scene representations have shown novel view synthesis results of unprecedented quality, like the ones of Neural Radiance Fields (NeRF), which use the weights of a multi-layer perceptron to model the volumetric density and color of a [...]

Towards Discriminative and Domain-Invariant Feature Learning

Abstract: Deep neural networks have achieved great success in various visual applications when trained with large amounts of labeled in-domain data. However, the networks usually suffer a heavy performance drop on data whose distribution is quite different from that of the training data. Domain adaptation methods aim to deal with such a performance gap caused by [...]

Learning Efficient Visual Representation on Model, Data, Label and Beyond

Abstract: Efficient deep learning is a broad concept in which we aim to learn compressed deep models and develop training algorithms to improve the efficiency of model representations, data and label utilization, etc. In recent years, deep neural networks have been recognized as one of the most effective techniques for many learning tasks, also, in the [...]

Self-supervised Learning and Generalization

Abstract: Contrastive self-supervised learning is a highly effective way of learning representations that are useful for, i.e. generalise to, a wide range of downstream vision tasks and datasets. In the first part of the talk, I will present MoCHi, our recently published contrastive self-supervised learning approach (NeurIPS 2020) that is able to learn transferable representations [...]

Enabling Robots to Cooperate & Compete: Distributed Optimization & Game Theoretic Methods for Multiple Interacting Robots

Abstract: For robots to effectively operate in our world, they must master the skills of dynamic interaction. Autonomous cars must safely negotiate their trajectories with other vehicles and pedestrians as they drive to their destinations. UAVs must avoid collisions with other aircraft, as well as dynamic obstacles on the ground. Disaster response robots must coordinate [...]

Learning to see from few labels

Abstract: Computer vision systems today exhibit a rich and accurate understanding of the visual world, but increasingly rely on learning on large labeled datasets to do so. This reliance on large labeled datasets is a problem especially when one considers difficult perception tasks, or novel domains where annotations might require effort or expertise. We thus [...]

The Role of Manipulation Primitives in Building Dexterous Robotic Systems

Abstract: I will start this talk by illustrating four different perspectives that we as a community have embraced to study robotic manipulation: 1) controlling a simplified model of the mechanics of interaction with an object; 2) using haptic feedback such as force or tactile to control the interaction with an environment; 3) planning sequences or [...]

Seeing the unseen: inferring unobserved information from multi-modal data

Abstract: As humans we can never fully observe the world around us and yet we are able to build remarkably useful models of it from our limited sensory data. Machine learning methods are often required to operate in a similar setup, that is, inferring unobserved information from observed information. Partial observations [...]

Design and Analysis of Open-Source Educational Haptic Devices

Abstract: The sense of touch (haptics) is an active perceptual system used from our earliest days to discover the world around us. However, formal education is not designed to take advantage of this sensory modality. As a result, very little is known about the effects of using haptics in K-12 and higher education or the [...]

Towards AI for 3D Content Creation

Abstract: 3D content is key in several domains such as architecture, film, gaming, and robotics. However, creating 3D content can be very time consuming -- the artists need to sculpt high quality 3D assets, compose them into large worlds, and bring these worlds to life by writing behaviour models that "drive" the characters around in [...]

Move over, MSE! – New probabilistic models of motion

Abstract: Data-driven character animation holds great promise for games, film, virtual avatars and social robots. A "virtual AI actor" that moves in response to intuitive, high-level input could turn 3D animators into directors, instead of requiring them to laboriously pose the character for each frame of animation, as is the case today. However, the high [...]

Understanding the Placenta: Towards an Objective Pregnancy Screening

Abstract: My research focusses on the development of a pregnancy screening tool that will: (i) be system- and user-independent; and (ii) provide a quantifiable measure of placental health. To this end, I am working towards the design of a multiparametric quantitative ultrasound (QUS) based placental tissue characterization method. The method would potentially identify the [...]

Human-Robot Interactive Collaboration & Communication

Abstract: Autonomous and anthropomorphic robots are poised to play a critical role in manufacturing, healthcare and the services industry in the near future. However, for this vision to become a reality, robots need to efficiently communicate and interact with their human partners. Rather than traditional remote controls and programming languages, adaptive and transparent techniques for [...]

Carnegie Mellon University

Robots “R” Us: 25 years of Robotics Technology Development and Commercialization at NREC

Abstract: Since its founding in 1979, the Robotics Institute (RI) at Carnegie Mellon University has been leading the world in robotics research and education. In the mid 1990s, RI created NREC as the applied R&D center within the Institute with a specific mission to apply robotics technology in an impactful way on real-world applications. In this talk, I will go over [...]

Relational Reasoning for Multi-Agent Systems

Abstract: Multi-agent interacting systems are prevalent in the world, from purely physical systems to complicated social dynamics systems. The interactions between entities / components can give rise to very complex behavior patterns at the level of both individuals and the whole system. In many real-world multi-agent interacting systems (e.g., traffic participants, mobile robots, sports players), [...]

Towards an Intelligence Architecture for Human-Robot Teaming

Abstract: Advances in autonomy are enabling intelligent robotic systems to enter human-centric environments like factories, homes and workplaces. To be effective as a teammate, we expect robots to accomplish more than performing simplistic repetitive tasks; they must perceive, reason, and perform semantic tasks in a human-like way. A robot's ability to act intelligently is fundamentally tied [...]

Self-supervised learning for visual recognition

Abstract: We are interested in learning visual representations that are discriminative for semantic image understanding tasks such as object classification, detection, and segmentation in images/videos. A common approach to obtain such features is to use supervised learning. However, this requires manual annotation of images, which is costly, ambiguous, and prone to errors. In contrast, self-supervised [...]

GANs for Everyone

Abstract: The power and promise of deep generative models such as StyleGAN, CycleGAN, and GauGAN lie in their ability to synthesize endless realistic, diverse, and novel content with user controls. Unfortunately, the creation and deployment of these large-scale models demand high-performance computing platforms, large-scale annotated datasets, and sophisticated knowledge of deep learning methods. This makes [...]

Reasoning over Text in Images for VQA and Captioning

Abstract: Text in images carries essential information for multimodal reasoning, such as VQA or image captioning. To enable machines to perceive and understand scene text and reason jointly with other modalities, 1) we collect the TextCaps dataset, which requires models to read and reason over text and visual content in the image to generate image [...]

Design and control of insect-scale bees and dog-scale quadrupeds

Abstract: Enhanced robot autonomy---whether it be in the context of extended tether-free flight of a 100mg insect-scale flapping-wing micro aerial vehicle (FWMAV), or long inspection routes for a quadrupedal robot---is hindered by fundamental constraints in power and computation. With this motivation, I will discuss a few projects I have worked on to circumvent these issues in [...]

Point Cloud Registration with or without Learning

Abstract: I will be presenting two of our recent works on 3D point cloud registration: A scene flow method for non-rigid registration: I will discuss our current method to recover scene flow from point clouds. Scene flow is the three-dimensional (3D) motion field of a scene, and it provides information about the spatial arrangement [...]

Dynamical Robots via Origami-Inspired Design

Abstract: Origami-inspired engineering produces structures with high strength-to-weight ratios and simultaneously lower manufacturing complexity. This reliable, customizable, cheap fabrication and component assembly technology is ideal for robotics applications in remote, rapid deployment scenarios that require platforms to be quickly produced, reconfigured, and deployed. Unfortunately, most examples of folded robots are appropriate only for small-scale, low-load [...]

Propelling Robot Manipulation of Unknown Objects using Learned Object Centric Models

Abstract: There is a growing interest in using data-driven methods to scale up manipulation capabilities of robots for handling a large variety of objects. Many of these methods are oblivious to the notion of objects and they learn monolithic policies from the whole scene in image space. As a result, they don’t generalize well to [...]

When and Why Does Contrastive Learning Work?

Abstract: Contrastive learning organizes data by pulling together related items and pushing apart everything else. These methods have become very popular but it's still not entirely clear when and why they work. I will share two ideas from our recent work. First, I will argue that contrastive learning is really about learning to forget. Different [...]

Anticipating the Future: forecasting the dynamics in multiple levels of abstraction

Abstract: A key navigational capability for autonomous agents is to predict the future locations, actions, and behaviors of other agents in the environment. This is particularly crucial for safety in the realm of autonomous vehicles and robots. However, many current approaches to navigation and control assume perfect perception and knowledge of the environment, even though [...]

Learning to Perceive Videos for Embodiment

Abstract: Video understanding has achieved tremendous success in computer vision tasks, such as action recognition, visual tracking, and visual representation learning. Recently, this success has gradually been translated into helping robots and embodied agents interact with their environments. In this talk, I am going to introduce our recent efforts on extracting self-supervisory signals and [...]

Open Challenges in Sign Language Translation & Production

Abstract: Machine translation and computer vision have greatly benefited from the advances in deep learning. Large and diverse amounts of textual and visual data have been used to train neural networks, whether in a supervised or self-supervised manner. Nevertheless, the convergence of the two fields in sign language translation and production still poses [...]

The Search for Ancient Life on Mars Began with a Safe Landing

Abstract: Prior Mars rover missions have all landed in flat and smooth regions, but for the Mars 2020 mission, which is seeking signs of ancient life, this was no longer acceptable. To maximize the variety of rock samples that will eventually be returned to Earth for analysis, the Perseverance rover needed to land in a [...]

3D Recognition with self-supervised learning and generic architectures

Abstract: Supervised learning relies on manual labeling which scales poorly with the number of tasks and data. Manual labeling is especially cumbersome for 3D recognition tasks such as detection and segmentation and thus most 3D datasets are surprisingly small compared to image or video datasets. 3D recognition methods are also fragmented based on the type [...]

Rapid Adaptation for Robot Learning

Abstract: How can we train a robot to generalize to diverse environments? This question underscores the holy grail of robot learning research because it is difficult to supervise an agent for all possible situations it can encounter in the future. We posit that the only way to guarantee such a generalization is to continually learn and [...]

Robotic Cave Exploration for Search, Science, and Survey

Abstract: Robotic cave exploration has the potential to create significant societal impact through facilitating search and rescue, in the fight against antibiotic resistance (science), and via mapping (survey). But many state-of-the-art approaches for active perception and autonomy in subterranean environments rely on disparate perceptual pipelines (e.g., pose estimation, occupancy modeling, hazard detection) that process the same underlying sensor data in [...]

Humans, hands, and horses: 3D reconstruction of articulated object categories using strong, weak, and self-supervision

Abstract: Reconstructing 3D objects from a single 2D image is a task that humans perform effortlessly, yet computer vision so far has only robustly solved 3D face reconstruction. In this talk we will see how we can extend the scope of monocular 3D reconstruction to more challenging, articulated categories such as human bodies, hands and [...]

Enabling Grounded Language Communication for Human-Robot Teaming

Abstract: The ability of robots to effectively understand natural language instructions and convey information about their observations and interactions with the physical world is highly dependent on the sophistication and fidelity of the robot’s representations of language, environment, and actions. As we progress towards more intelligent systems that perform a wider range of tasks in a [...]

Looking behind the Seen in Order to Anticipate

Abstract: Despite significant recent progress in computer vision and machine learning, personalized autonomous agents often still don’t participate robustly and safely across tasks in our environment. We think this is largely because they lack an ability to anticipate, which in turn is due to a missing understanding about what is happening behind the seen, i.e., [...]

Robots that Learn through Language

Abstract: Advances in perception have been integral to transitioning robots from machines restricted to factory automation to autonomous agents that operate robustly in unstructured environments. As our surrogates, robots enable people to explore the deepest depths of the ocean and distant regions of space, making discoveries that would otherwise be impossible. The age of robots [...]

Towards Reconstructing Any Object in 3D

Abstract: The world we live in is incredibly diverse, comprising over 10k natural and man-made object categories. While the computer vision community has made impressive progress in classifying images from such diverse categories, the state-of-the-art 3D prediction systems are still limited to merely tens of object classes. A key reason for this stark difference [...]

The Clinician’s AI Partner: Augmenting Clinician Capabilities Across the Spectrum of Healthcare

Abstract: Clinicians often work under highly demanding conditions to deliver complex care to patients. As our aging population grows and care becomes increasingly complex, physicians and nurses are now also experiencing feelings of burnout at unprecedented levels. In this talk, I will discuss possibilities for computer vision to function as a partner to clinicians, and to augment their capabilities, across [...]

The Unusual Effectiveness of Abstractions for Assistive AI

Abstract: Can we balance efficiency and reliability while designing assistive AI systems? What would such AI systems need to provide? In this talk I will present some of our recent work addressing these questions. In particular, I will show that a few fundamental principles of abstraction are surprisingly effective in designing efficient and reliable AI [...]

Reliable and Accessible Visual Recognition

Abstract: As visual recognition models are developed across diverse applications, we need the ability to reliably deploy our systems in a variety of environments. At the same time, visual models tend to be trained and evaluated on a static set of curated and annotated data which only represents a subset of the world. In this [...]

Fake It Till You Make It: Face analysis in the wild using synthetic data alone

Abstract: In this seminar I will demonstrate how synthetic data alone can be used to perform face-related computer vision in the wild. The community has long enjoyed the benefits of synthesizing training data with graphics, but the domain gap between real and synthetic data has remained a problem, especially for human faces. Researchers have tried [...]

Robotics and Warehouse Automation at Berkshire Grey

Abstract: This talk tells the Berkshire Grey story, from its founding in 2013 to its IPO earlier this year, the first robotics IPO since iRobot over 15 years ago. Berkshire Grey produces automated systems for e-commerce order fulfillment, parcel sortation, store replenishment, and related operations in warehouses, distribution centers, and in the back ends of [...]

Leveraging StyleGAN for Image Editing and Manipulation

Abstract: StyleGAN has recently been established as the state-of-the-art unconditional generator, synthesizing images of phenomenal realism and fidelity, particularly for human faces. With its rich semantic space, many works have attempted to understand and control StyleGAN’s latent representations with the goal of performing image manipulations. To perform manipulations on real images, however, one must learn to [...]

Resilient Exploration in SubT Environments: Team Explorer’s Approach and Lessons Learned in the Final Event

Abstract: Subterranean robot exploration is difficult with many mobility, communications, and navigation challenges that require an approach with a diverse set of systems, and reliable autonomy. While prior work has demonstrated partial successes in addressing the problem, here we convey a comprehensive approach to address the problem of subterranean exploration in a wide range of [...]

Next-Gen Video Communication

Abstract: Video communication connects our world. It is necessary in conducting business, educational and personal activities across different geographical locations. However, the quality of an average user’s video communication is dramatically worse than that of professionally created videos in news broadcasts, talk shows, and on YouTube. This is because professionally created videos are often captured with [...]

Activity Understanding of Scripted Performances

Abstract: The PSU Taichi for Smart Health project has been doing a deep-dive into vision-based analysis of 24-form Yang-style Taichi (TaijiQuan). A key property of Taichi, shared by martial arts katas and prearranged form exercises in other sports, is practice of a scripted routine to build both mental and physical competence. The scripted nature of routines [...]

Domain adaptive object detection

Abstract: Recent advances in deep learning have led to the development of accurate and efficient models for object detection. However, learning highly accurate models relies on the availability of large-scale annotated datasets. Due to this, model performance drops drastically when evaluated on label-scarce datasets having visually distinct images. Domain adaptation tries to mitigate this degradation. In [...]

Visual Understanding across Semantic Groups, Domains and Devices

Abstract: Deep neural networks often lack generalization capabilities to accommodate changes in the input/output domain distributions and, therefore, are inherently limited by the restricted visual and semantic information contained in the original training set. In this talk, we argue the importance of the versatility of deep neural architectures and we explore it from various perspectives. [...]

Towards Robust Human-Robot Interaction: A Quality Diversity Approach

Abstract: The growth of scale and complexity of interactions between humans and robots highlights the need for new computational methods to automatically evaluate novel algorithms and applications. Exploring the diverse scenarios of interaction between humans and robots in simulation can improve understanding of complex human-robot interaction systems and avoid potentially costly failures in real-world settings. [...]

Topology-Driven Learning for Biomedical Imaging Informatics

Abstract: Thanks to decades of technology development, we are now able to visualize in high quality complex biomedical structures such as neurons, vessels, trabeculae and breast tissues. We need innovative methods to fully exploit these structures, which encode important information about underlying biological mechanisms. In this talk, we explain how topology, i.e., connected components, handles, loops, [...]

Lessons from the Field: Deep Learning and Machine Perception for field robots

Abstract: Mobile robots now deliver vast amounts of sensor data from large unstructured environments. In attempting to process and interpret this data there are many unique challenges in bridging the gap between prerecorded data sets and the field. This talk will present recent work addressing the application of machine learning techniques to mobile robotic perception. [...]

Learning generative representations for image distributions

Abstract: Autoencoder neural networks are an unsupervised technique for learning representations, which have been used effectively in many data domains. While capable of generating data, autoencoders have been inferior to other models like Generative Adversarial Networks (GANs) in their ability to generate image data. We will describe a general autoencoder architecture that addresses this limitation, and [...]

Building Intelligent and Visceral Machines: From Sensing to Application

Abstract: Humans have evolved to have highly adaptive behaviors that help us survive and thrive. As AI prompts a move from computing interfaces that are explicit and procedural to those that are implicit and intelligent, we are presented with extraordinary opportunities. In this talk, I will argue that understanding affective and behavioral signals presents many opportunities [...]

GANcraft – an unsupervised 3D neural method for world-to-world translation

Abstract: Advances in 2D image-to-image translation methods, such as SPADE/GauGAN, have enabled users to paint photorealistic images by drawing simple sketches similar to those created in Microsoft Paint. Despite these innovations, creating a realistic 3D scene remains a painstaking task, out of the reach of most people. It requires years of expertise, professional software, a library [...]

Learning Optical Flow: Model, Data, and Applications

Abstract: Optical flow provides important information about the dynamic world and is of fundamental importance to many tasks. In this talk, I will present my work on different aspects of learning optical flow. I will start with the background and talk about PWC-Net, a compact and effective model built using classical principles for optical flow. Next, [...]

Distributed Dissipativity: Applying Foundational Stability Theory to Modern Networked Control

Abstract: Despite its diverse areas of application, the desire to optimize performance and guarantee acceptable behaviour in the face of inevitable uncertainty is pervasive throughout control theory. This creates a fundamental challenge since the necessity of robustly stable control schemes often favors conservative designs, while the desire to optimize performance typically demands the opposite. While [...]

Haptic Perspective-taking from Vision and Force

Abstract: Physically collaborative robots present an opportunity to positively impact society across many domains. However, robots currently lack the ability to infer how their actions physically affect people. This is especially true for robotic caregiving tasks that involve manipulating deformable cloth around the human body, such as dressing and bathing assistance. In this talk, I [...]

Do Vision-Language Pretrained Models Learn Spatiotemporal Primitive Concepts?

Abstract: Vision-language models pretrained on web-scale data have revolutionized deep learning in the last few years. They have demonstrated strong transfer learning performance on a wide range of tasks, even under the "zero-shot" setup, where text "prompts" serve as a natural interface for humans to specify a task, as opposed to collecting labeled data. These models are [...]

Perception-Action Synergy in Uncertain Environments

Abstract: Many robotic applications require a robot to operate in an environment with unknowns or uncertainty, at least initially, before it gathers enough information about the environment. In such a case, a robot must rely on sensing and perception to feel its way around. Moreover, it has to couple sensing/perception and motion synergistically in real [...]

Max-Affine Spline Insights into Deep Learning

Abstract: We build a rigorous bridge between deep networks (DNs) and approximation theory via spline functions and operators. Our key result is that a large class of DNs can be written as a composition of max-affine spline operators (MASOs) that provide a powerful portal through which we view and analyze their inner workings. For instance, [...]

Teruko Yata Memorial Lecture

Leveraging Language and Video Demonstrations for Learning Robot Manipulation Skills and Enabling Closed-Loop Task Planning

Abstract: Humans have gradually developed language, mastered complex motor skills, and created and utilized sophisticated tools. The act of conceptualization is fundamental to these abilities because it allows humans to mentally represent, summarize and abstract diverse knowledge and skills. By means of [...]

Designing Robotic Systems with Collective Embodied Intelligence

Abstract: Natural swarms exhibit sophisticated colony-level behaviors with remarkable scalability and error tolerance. Their evolutionary success stems from more than just intelligent individuals; it hinges on their morphology, their physical interactions, and the way they shape and leverage their environment. Mound-building termites, for instance, are believed to use their own body as a template for [...]

Understanding 3D Scenes and Interacting Hands

Abstract: The long-term goal of my research is to help computers understand the physical world from images, including both 3D properties and how humans or robots could interact with things. This talk will summarize two recent directions aimed at enabling this goal. I will begin with learning to reconstruct full 3D scenes, including [...]

Snakes & Spiders, Robots & Geometry

Abstract: Locomotion and perception are a common thread between robotics and biology. Understanding these phenomena at a mechanical level involves nonlinear dynamics and the coordination of many degrees of freedom. In this talk, I will discuss geometric approaches to organizing this information in two problem domains: Undulatory locomotion of snakes and swimmers, and vibration propagation [...]

Multimodal Modeling: Learning Beyond Visual Knowledge

Abstract: The computer vision community has embraced the success of learning specialist models by training with a fixed set of predetermined object categories, such as ImageNet or COCO. However, learning only from visual knowledge might hinder the flexibility and generality of visual models, which requires additional labeled data to specify any other visual concept and [...]

Robotic Cave Exploration for Search, Science, and Survey

Abstract: Robotic cave exploration has the potential to create significant societal impact through facilitating search and rescue, in the fight against antibiotic resistance (science), and via mapping (survey). But many state-of-the-art approaches for active perception and autonomy in subterranean environments rely on disparate perceptual pipelines (e.g., pose estimation, occupancy modeling, hazard detection) that process the same underlying sensor data in different [...]

Audio-Visual Learning for Social Telepresence

Abstract: Relationships between people are strongly influenced by distance. Even with today’s technology, remote communication is limited to a two-dimensional audio-visual experience and lacks the availability of a shared, three-dimensional space in which people can interact with each other over the distance. Our mission at Reality Labs Research (RLR) in Pittsburgh is to develop such [...]

Representations in Robot Manipulation: Learning to Manipulate Ropes, Fabrics, Bags, and Liquids

Abstract: The robotics community has seen significant progress in applying machine learning for robot manipulation. However, much manipulation research focuses on rigid objects instead of highly deformable objects such as ropes, fabrics, bags, and liquids, which pose challenges due to their complex configuration spaces, dynamics, and self-occlusions. To achieve greater progress in robot manipulation of [...]

Safe and Stable Learning for Agile Robots without Reinforcement Learning

Abstract: My research group (https://aerospacerobotics.caltech.edu/) is working to systematically leverage AI and Machine Learning techniques towards achieving safe and stable autonomy of safety-critical robotic systems, such as robot swarms and autonomous flying cars. Another example is LEONARDO, the world's first bipedal robot that can walk, fly, slackline, and skateboard. Stability and safety are often research problems [...]

Towards editable indoor lighting estimation

Abstract: Combining virtual and real visual elements into a single, realistic image requires the accurate estimation of the lighting conditions of the real scene. In recent years, several approaches of increasing complexity---ranging from simple encoder-decoder architectures to more sophisticated volumetric neural rendering---have been proposed. While the quality of automatic estimates has increased, they have the unfortunate downside [...]

Computational imaging with multiply scattered photons

Abstract: Computational imaging has advanced to a point where the next significant milestone is to image in the presence of multiply-scattered light. Though traditionally treated as noise, multiply-scattered light carries information that can enable previously impossible imaging capabilities, such as imaging around corners and deep inside tissue. The combinatorial complexity of multiply-scattered light transport makes [...]

Towards $1 robots

Abstract: Robots are pretty great -- they can make some hard tasks easy, some dangerous tasks safe, or some unthinkable tasks possible. And they're just plain fun to boot. But how many robots have you interacted with recently? And where do you think that puts you compared to the rest of the world's people? In [...]

Mental models for 3D modeling and generation

Abstract: Humans have extraordinary capabilities of comprehending and reasoning about our 3D visual world. One particular reason is that when looking at an object or a scene, not only can we see the visible surface, but we can also hallucinate the invisible parts - the amodal structure, appearance, affordance, etc. We have accumulated thousands of [...]

What (else) can you do with a robotics degree?

Abstract: In 2004, half-way through my robotics Ph.D., I had a panic-inducing thought: What if I don’t want to build robots for the rest of my life? What can I do with this degree?! Nearly twenty years later, I have some answers: tackle climate change in Latin America, educate Congress about autonomous vehicles, improve how [...]

Complete Codec Telepresence

Abstract: Imagine two people, each of them within their own home, being able to communicate and interact virtually with each other as if they are both present in the same shared physical space. Enabling such an experience, i.e., building a telepresence system that is indistinguishable from reality, is one of the goals of Reality Labs [...]

R.I.P ohyay: experiences building online virtual experiences during the pandemic: what works, what hasn’t, and what we need in the future

Abstract: During the pandemic I helped design ohyay (https://ohyay.co), a creative tool for making and hosting highly customized video-based virtual events. Since Fall 2020 I have personally designed many online events, ranging from classroom activities (lectures, small group work, poster sessions, technical papers PC meetings), to conferences, to virtual offices, to holiday parties involving 100s [...]

Physics-informed image translation

Abstract: Generative Adversarial Networks (GANs) have shown remarkable performance in image translation, being able to map source input images to target domains (e.g. from male to female, day to night, etc.). However, their performance may be limited by insufficient supervision, as supervision may be challenging to obtain. In this talk, I will present our recent works [...]

Robots Should Reduce, Reuse, and Recycle

Abstract: Despite numerous successes in deep robotic learning over the past decade, the generalization and versatility of robots across environments and tasks has remained a major challenge. This is because much of reinforcement and imitation learning research trains agents from scratch in a single or a few environments, training special-purpose policies from special-purpose datasets. In [...]

Weak Multi-modal Supervision for Object Detection and Persuasive Media

Abstract: The diversity of visual content available on the web presents new challenges and opportunities for computer vision models. In this talk, I present our work on learning object detection models from potentially noisy multi-modal data, retrieving complementary content across modalities, transferring reasoning models across dataset boundaries, and recognizing objects in non-photorealistic media. While the [...]

Machine Learning and Model Predictive Control for Adaptive Robotic Systems

Abstract: In this talk I will discuss several different ways in which ideas from machine learning and model predictive control (MPC) can be combined to build intelligent, adaptive robotic systems. I’ll begin by showing how to learn models for MPC that perform well on a given control task. Next, I’ll introduce an online learning perspective on [...]

Towards more effective remote execution of exploration operations using multimodal interfaces

Abstract: Remote robots enable humans to explore and interact with environments while keeping them safe from existing harsh conditions (e.g., in search and rescue, deep sea or planetary exploration scenarios). However, the gap between the control station and the remote robot presents several challenges (e.g., situation awareness, cognitive load, perception, latency) for effective teleoperation. Multimodal [...]

Learning Visual, Audio, and Cross-Modal Correspondences

Abstract: Today's machine perception systems rely heavily on supervision provided by humans, such as labels and natural language. I will talk about our efforts to make systems that, instead, learn from two ubiquitous sources of unlabeled data: visual motion and cross-modal sensory associations. I will begin by discussing our work on creating unified models for [...]

Multi-Sensor Robot Navigation and Subterranean Exploration

Towards a formal theory of deep optimisation

Abstract: Precise understanding of the training of deep neural networks is largely restricted to architectures such as MLPs and cost functions such as the square cost, which is insufficient to cover many practical settings. In this talk, I will argue for the necessity of a formal theory of deep optimisation. I will describe such a [...]

Towards Interactive Radiance Fields

Abstract: Over the last few years, the fields of computer vision and computer graphics have increasingly converged. Using the exact same processes to model appearance during 3D reconstruction and rendering has shown tremendous benefits, especially when combined with machine learning techniques to model otherwise hard-to-capture or -simulate optical effects. In this talk, I will give an [...]

Learning Representations for Interactive Robotics

In this talk, I will use a few different vignettes to discuss the role of learning representations for robots that interact with humans and robots that interactively learn from humans. I will first discuss how the bounded rationality of humans guided us towards developing learned latent action spaces for shared autonomy. It turns out this “bounded rationality” is not a [...]

Motion Planning Around Obstacles with Graphs of Convex Sets

Abstract: In this talk, I'll describe a new approach to planning that strongly leverages both continuous and discrete/combinatorial optimization. The framework is fairly general, but I will focus on a particular application of the framework to planning continuous curves around obstacles. Traditionally, these sorts of motion planning problems have either been solved by trajectory optimization [...]

RE2 Robotics: from RI spinout to Acquisition

Abstract: It was July 2001. Jorgen Pedersen founded RE2 Robotics. It was supposed to be a temporary venture while he figured out his next career move. But the journey took an unexpected course. RE2 became a leading developer of mobile manipulation systems. Fast forward to 2022, RE2 Robotics exited via an acquisition to Sarcos Technology and [...]

Enabling Self-sufficient Robot Learning

Abstract: Autonomous exploration and data-efficient learning are important ingredients for helping machine learning handle the complexity and variety of real-world interactions. In this talk, I will describe methods that provide these ingredients and serve as building blocks for enabling self-sufficient robot learning. First, I will outline a family of methods that facilitate active global exploration. [...]

Understanding the Physical World from Images

If I show you a photo of a place you have never been to, you can easily imagine what you could do in that picture. Your understanding goes from the surfaces you see to the ones you know are there but cannot see, and can even include reasoning about how interaction would change the scene. [...]

How Computer Vision Helps – from Research to Scale

Abstract: Vasudevan (Vasu) Sundarababu, SVP and Head of Digital Engineering, will cover the topic: ‘How Computer Vision Helps – from Research to Scale’. During his talk, Vasu will explore how Computer Vision technology can be leveraged in-market today, the key projects he is currently leading that leverage CV, and the end-to-end lifecycle of a CV initiative - [...]

Motion Matters in the Metaverse

Abstract: In the early 1970s, psychologists investigated biological motion perception by attaching point-lights to the joints of the human body, producing displays known as ‘point light walkers’. These early experiments showed biological motion perception to be an extreme example of sophisticated pattern analysis in the brain, capable of easily differentiating human motions with reduced motion cues. Further [...]

What do generative models know about geometry and illumination?

Abstract: Generative models can produce compelling pictures of realistic scenes. Objects are in sensible places, surfaces have rich textures, illumination effects appear accurate, and the models are controllable. These models, such as StyleGAN, can also generate semantically meaningful edits of scenes by modifying internal parameters. But do these models manipulate a purely abstract representation of the [...]

Life as a Professor Seminar

Have you ever wondered what life is like as a professor? What do professors do on a daily basis? What makes the faculty career challenging and rewarding? Maybe you have even thought about becoming a faculty member yourself? Join us on March 22nd from 2:00 - 3:30 PM, where a panel of CMU faculty will [...]

A Constructivist’s Guide to Robot Learning

Over the last decade, a variety of paradigms have sought to teach robots complex and dexterous behaviors in real-world environments. On one end of the spectrum we have nativist approaches that bake in fundamental human knowledge through physics models, simulators and knowledge graphs. While on the other end of the spectrum we have tabula-rasa approaches [...]

Robot Learning by Understanding Egocentric Videos

Abstract: True gains of machine learning in AI sub-fields such as computer vision and natural language processing have come about from the use of large-scale diverse datasets for learning. In this talk, I will discuss if and how we can leverage large-scale diverse data in the form of egocentric videos (first-person videos of humans conducting [...]

Next-Generation Robot Perception: Hierarchical Representations, Certifiable Algorithms, and Self-Supervised Learning

Spatial perception, the robot’s ability to sense and understand the surrounding environment, is a key enabler for robot navigation, manipulation, and human-robot interaction. Recent advances in perception algorithms and systems have enabled robots to create large-scale geometric maps of unknown environments and detect objects of interest. Despite these advances, a large gap still separates robot [...]

Autonomous mobility in Mars exploration: recent achievements and future prospects

Abstract: This talk will summarize key recent advances in autonomous surface and aerial mobility for Mars exploration, then discuss potential future missions and technology needs for Mars and other planetary bodies. Among recent advances, the Perseverance rover that is now operating on Mars includes new autonomous navigation capability that dramatically increases its traverse speed over [...]

Structures and Environments for Generalist Agents

Abstract: We are entering an era of highly general AI, enabled by supervised models of the Internet. However, it remains an open question how intelligence emerged in the first place, before there was an Internet to imitate. Understanding the emergence of skillful behavior, without expert data to imitate, has been a longstanding goal of reinforcement [...]

From Videos to 4D Worlds and Beyond

Abstract: The world underlying images and videos is 3-dimensional and dynamic, i.e. 4D, with people interacting with each other, objects, and the underlying scene. Even in videos of a static scene, there is always the camera moving about in the 4D world. Accurately recovering this information is essential for building systems that can reason [...]

Mars Robots and Robotics at NASA JPL

Abstract: In this seminar I’ll discuss Mars robots, the unprecedented results we’re seeing with the latest Mars mission, and how we got here. Perseverance’s manipulation and sampling systems have collected samples from unique locations at twice the rate of any prior mission. 88% of all driving has been autonomous. This has enabled the mission to [...]

Generative and Animatable Radiance Fields

Abstract: Generating and transforming content requires both creativity and skill. Creativity defines what is being created and why, while skill answers the question of how. While creativity is believed to be abundant, skill can often be a barrier to creativity. In our team, we aim to substantially reduce this barrier. Recent Generative AI methods have simplified the problem for 2D [...]

Generative modeling: from 3D scenes to fields and manifold

Abstract: In this keynote talk, we delve into some of our progress on generative models that are able to capture the distribution of intricate and realistic 3D scenes and fields. We explore a formulation of generative modeling that optimizes latent representations for disentangling radiance fields and camera poses, enabling both unconditional and conditional generation of 3D [...]

Estimating Robustness using Proxies

Abstract: This talk covers some of our recent explorations on estimating the robustness of black-box machine learning models across data subpopulations. In other words, we ask whether a trained model is uniformly accurate across different types of inputs, or whether there are significant performance disparities affecting the different subpopulations. Measuring such a characteristic is fairly straightforward if [...]

Latent-NeRF for Shape-Guided Generation of 3D Shapes and Textures

Abstract: In this talk, I will present my recent work, which will appear at CVPR in less than two months. Text-guided image generation has progressed rapidly in recent years, inspiring major breakthroughs in text-guided shape generation. Recently, it has been shown that using score distillation, one can successfully text-guide a NeRF model to [...]

Navigating to Objects in the Real World

Abstract: Semantic navigation is necessary to deploy mobile robots in uncontrolled environments like our homes, schools, and hospitals. Many learning-based approaches have been proposed in response to the lack of semantic understanding of the classical pipeline for spatial navigation, which builds a geometric map using depth sensors and plans to reach point goals. Broadly, end-to-end [...]

Going Beyond Continual Learning: Towards Organic Lifelong Learning

Abstract: Supervised learning, the harbinger of machine learning over the last decade, has had tremendous impact across application domains in recent years. However, the notion of a static trained machine learning model is becoming increasingly limiting, as these models are deployed in changing and evolving environments. Among a few related settings, continual learning has gained significant [...]

Predictive Scene Representations for Embodied Visual Search

Abstract: My research advances embodied AI by developing large-scale datasets and state-of-the-art algorithms. In my talk, I will specifically focus on the embodied visual search problem, which aims to enable intelligent search for robots and augmented reality (AR) assistants. Embodied visual search manifests as the visual navigation problem in robotics, where a mobile agent must efficiently navigate [...]

Special RI Seminar

Title: Testing, Analysis, and Specification for Robust and Reliable Robot Software

Abstract: Building robust and reliable robotic software is an inherently challenging feat that requires substantial expertise across a variety of disciplines. Despite that, writing robot software has never been easier thanks to software frameworks such as ROS: At its best, ROS allows newcomers to assemble simple, [...]

Generating Beautiful Pixels

Abstract: In this talk, I will present three experiments that use low-level image statistics to generate high-resolution detailed outputs. In the first experiment, I will use 2D pixels to efficiently mine hard examples for better learning. Simply biasing ray sampling towards hard ray examples enables learning of neural fields with more accurate high-frequency detail in less [...]

Towards Reliable Computer Vision Systems

Abstract: The real world has infinite visual variation – across viewpoints, time, space, and curation. As deep visual models become ubiquitous in high-stakes applications, their ability to generalize across such variation becomes increasingly important. In this talk, I will present opportunities to improve such generalization at different stages of the ML lifecycle: first, I will [...]

Transforming Hollywood Visual Effects with Graphics and Vision

Abstract: Paul will describe his path to developing visual effects technology used in hundreds of movies, including The Matrix, Spider-Man 2, Benjamin Button, Avatar, Maleficent, Furious 7, and Blade Runner: 2049. These techniques include image-based modeling and rendering, high dynamic range imaging, image-based lighting, and high-resolution facial scanning for photoreal digital actors. Paul will also [...]

Vision without labels

Abstract: Deep learning has revolutionized all aspects of computer vision, but its successes have come from supervised learning at scale: large models trained on ever larger labeled datasets. However, this reliance on labels makes these systems fragile when it comes to new scenarios or new tasks where labels are unavailable. This is in stark contrast to [...]

Learning Meets Gravity: Robots that Learn to Embrace Dynamics from Data

Abstract: Despite the incredible capabilities (speed and repeatability) of our hardware today, many robot manipulators are deliberately programmed to avoid dynamics – moving slowly enough so they can adhere to quasi-static assumptions of the world. In contrast, people frequently (and subconsciously) make use of dynamic phenomena to manipulate everyday objects – from unfurling blankets, to [...]

Large Multimodal (Vision-Language) Models for Image Generation and Understanding